In this article we will look at color vs. monochrome sensors: what a Bayer filter does in a sensor and what color sensors are made of. We will start with what a sensor is, then explain the Bayer filter in detail, and end with the actual differences between color and monochrome sensors. So, let's start.

What is a Sensor:

A sensor is the hardware component of a camera that captures light, converts it into electrical signals, and turns those signals into binary numerical values that are stored as image data. It largely determines the character of an image, from resolution and image size to low-light performance, dynamic range and depth of field. Monochrome camera sensors, in particular, can capture more detail and offer higher sensitivity than is possible with a color/RGB sensor.

Photosites: The sensor array is made up of millions of tiny individual light-sensitive elements called photosites. Depending on the design of the sensor, a photosite (or pixel) contains the circuitry needed to record the value of a single pixel; on a color sensor, that value covers just one color.

Virtually every digital sensor works by capturing light in an array of photosites (a sensor is made of millions of them), much like a grid of buckets collecting raindrops. When the exposure starts, each photosite is uncovered to collect incoming light. When the exposure ends, each photosite is read out as an electronic signal, which is then quantized, processed and stored as a binary numerical value in an image file.
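
To make the bucket analogy concrete, here is a minimal Python sketch of what happens to a single photosite during readout. The quantum efficiency, full-well capacity and 12-bit depth used as defaults are illustrative assumptions, not figures for any real sensor, and the function name is hypothetical.

```python
def photosite_to_dn(photons: float,
                    quantum_efficiency: float = 0.5,
                    full_well: int = 10_000,
                    bit_depth: int = 12) -> int:
    """Turn the light collected by one photosite into a digital number (DN).

    All default parameters are illustrative assumptions.
    """
    # Only a fraction of incoming photons free an electron (quantum efficiency).
    electrons = photons * quantum_efficiency
    # Like a bucket of rain, the photosite overflows once it is full.
    electrons = min(electrons, full_well)
    # The analog-to-digital converter maps 0..full_well onto 0..2**bit_depth - 1.
    max_dn = 2 ** bit_depth - 1
    return round(electrons / full_well * max_dn)


if __name__ == "__main__":
    for photons in (0, 5_000, 20_000, 50_000):
        dn = photosite_to_dn(photons)
        print(f"{photons:>6} photons -> DN {dn:>4} -> binary {dn:012b}")
```

The last two inputs both saturate the photosite, which is why very bright areas clip to pure white. The `:012b` format shows the stored value as a 12-bit binary number.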

However, these photosites only measure the quantity of light, not its color. To capture color, the sensor also needs a way to separate the light and record a value for each color.

Bayer Filter:

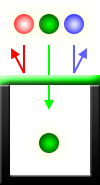

The Bayer filter is what turns a monochrome sensor into a color (RGB) sensor. It is an additional layer that sits on top of the sensor and places a red, green or blue filter over each photosite in an alternating pattern, so that there are twice as many green photosites as red or blue ones. The values from these photosites are then intelligently combined to produce full-color pixels, using a process called “demosaicing”.
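
The sketch below, in plain Python, simulates an RGGB Bayer layout (one common arrangement; which color starts the pattern varies between sensors) and a deliberately naive demosaicing step that averages same-colored neighbors. Real cameras use far more sophisticated, often proprietary, demosaicing algorithms, and the function names here are hypothetical.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]
R, G, B = 0, 1, 2  # channel indices


def bayer_channel(row: int, col: int) -> int:
    """Which color an RGGB Bayer filter lets through at (row, col)."""
    if row % 2 == 0:
        return R if col % 2 == 0 else G  # even rows: red, green, red, green ...
    return G if col % 2 == 0 else B      # odd rows: green, blue, green, blue ...


def mosaic(image: List[List[RGB]]) -> List[List[float]]:
    """Simulate the filter: each photosite keeps only one of its three channels."""
    return [[pixel[bayer_channel(r, c)] for c, pixel in enumerate(row)]
            for r, row in enumerate(image)]


def demosaic(raw: List[List[float]]) -> List[List[RGB]]:
    """Naive demosaicing: rebuild R, G and B at every pixel by averaging the
    same-color samples in its 3x3 neighborhood. Assumes at least a 2x2 image,
    so every neighborhood contains all three colors."""
    h, w = len(raw), len(raw[0])
    result = []
    for r in range(h):
        out_row = []
        for c in range(w):
            sums = [0.0, 0.0, 0.0]
            counts = [0, 0, 0]
            for rr in range(max(0, r - 1), min(h, r + 2)):
                for cc in range(max(0, c - 1), min(w, c + 2)):
                    ch = bayer_channel(rr, cc)
                    sums[ch] += raw[rr][cc]
                    counts[ch] += 1
            out_row.append((sums[R] / counts[R],
                            sums[G] / counts[G],
                            sums[B] / counts[B]))
        result.append(out_row)
    return result


if __name__ == "__main__":
    counts = [0, 0, 0]
    for r in range(4):
        for c in range(4):
            counts[bayer_channel(r, c)] += 1
    print("R, G, B photosites in a 4x4 patch:", counts)  # [4, 8, 4]
```

Feeding a uniformly colored image through `mosaic()` and then `demosaic()` gives back the original colors. Around fine edges, where neighboring samples differ, this kind of naive averaging blurs detail and produces color fringing, which is why real demosaicing algorithms are much smarter.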

A high quality image requires a sensor that measures all of the following as accurately as possible:

- How much light is received

- The color of this light

- Precisely where this light hits the sensor.

Improving these three measurements brings better dynamic range, color accuracy and resolution. For example, using more red and blue filters at the expense of green gives more color resolution but less brightness resolution. Alternatively, photosites without color filters don’t discard any light or require demosaicing, but they only yield a monochrome image. An optimally designed sensor is therefore one that provides the best compromise for a given task.

Color vs Monochrome Sensors:

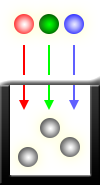

Now let's look at how color and monochrome sensors differ in their implementation. Unlike color sensors, monochrome sensors capture all incoming light at each pixel, regardless of its color. Since red, green and blue light are all absorbed, each pixel receives up to 3x more light, which amounts to up to 3x more exposure for the image.

This translates into roughly 1.5x more detail and light sensitivity than an RGB sensor provides. Unlike color sensors, monochrome sensors also do not require demosaicing to create the final image: the value recorded at each photosite simply becomes the value of the corresponding pixel. As a result, monochrome sensors are able to achieve slightly higher resolution than RGB ones.

Another advantage is that image noise is lower at equivalent ISO speeds, and resolution is higher. Such improvements in image quality can be critical when shooting in low light.
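
The light and noise argument can be put into numbers with a simple, idealized model. The sketch below assumes each color filter passes exactly one third of the incoming light (real filters overlap and are not this clean) and that noise comes only from photon shot noise plus a fixed read noise; the photon count and read-noise value are made-up examples and the function names are hypothetical.

```python
import math


def captured_photons(incident: float, color_filter: bool) -> float:
    """Photons a pixel records under the idealized one-third filter model."""
    # A red, green or blue filter discards the other two thirds of white light.
    return incident / 3 if color_filter else incident


def snr(signal: float, read_noise: float = 3.0) -> float:
    """Signal-to-noise ratio: shot noise (sqrt of signal) plus read noise."""
    return signal / math.sqrt(signal + read_noise ** 2)


if __name__ == "__main__":
    incident = 300.0  # hypothetical photons reaching one pixel in dim light
    color = captured_photons(incident, color_filter=True)
    mono = captured_photons(incident, color_filter=False)
    print(f"color pixel: {color:.0f} photons, SNR {snr(color):.1f}")
    print(f"mono pixel:  {mono:.0f} photons, SNR {snr(mono):.1f}")
```

With shot noise alone, 3x the light improves the signal-to-noise ratio by √3, about 1.73x, rather than 3x. That is still a clearly visible reduction in noise, and it matters most in dim scenes where every photon counts.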

Advantages of Monochrome Sensor:

- Better low light performance with less noise.

- Higher-resolution images with superior quality compared to a color sensor

- Helps capture breathtaking B/W shots with good contrast and better exposure, thanks to the dedicated monochrome sensor.

RGB or Color Sensor:

Color sensors work by capturing only one of the primary colors at each photosite in an alternating pattern, using something called a “color filter array” (CFA). The Bayer filter is the most common CFA and uses alternating rows of red-green and green-blue filters.

A necessary but undesirable side effect of a color filter array is that each pixel effectively captures only about 1/3 of the incoming light, since any color that doesn't match its filter is blocked. For example, any red or blue light that hits a green pixel won’t be recorded.

FINAL SAY:

I hope this makes it clear why many phone makers now use a monochrome sensor in their dual-camera setups to improve image quality. They give the secondary camera a monochrome sensor and then combine both images (RGB + monochrome) to get a better result. The dedicated monochrome sensor also helps capture breathtaking B/W shots with good contrast and better exposure, and these are noticeably better than a color photo converted to B/W in editing software.
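
As a rough illustration of how an RGB frame and a monochrome frame might be combined, the sketch below takes the brightness (luma) of each pixel from the monochrome frame and the color from the RGB frame. It assumes the two frames are already perfectly aligned and normalized to 0..1, and it uses the BT.601 luma weights. Actual phone pipelines (alignment, noise reduction, detail transfer) are proprietary and considerably more involved, and the function names are hypothetical.

```python
from typing import Tuple

RGB = Tuple[float, float, float]

# BT.601 luma weights; they sum to 1, which the fusion step relies on.
LUMA_WEIGHTS = (0.299, 0.587, 0.114)


def luma(rgb: RGB) -> float:
    """Brightness of an RGB pixel as a weighted sum of its channels."""
    return sum(w * c for w, c in zip(LUMA_WEIGHTS, rgb))


def fuse_pixel(rgb: RGB, mono: float) -> RGB:
    """Keep the color of `rgb` but take its brightness from `mono`.

    Adding the same offset to R, G and B preserves the color differences
    R-Y, G-Y and B-Y while moving luma to `mono`, because the weights sum
    to 1. Results are clipped to 0..1, which can shift very bright colors.
    """
    offset = mono - luma(rgb)
    return tuple(min(1.0, max(0.0, c + offset)) for c in rgb)


if __name__ == "__main__":
    noisy_color = (0.42, 0.30, 0.18)  # hypothetical dim, noisy color pixel
    clean_mono = 0.36                 # hypothetical cleaner monochrome reading
    fused = fuse_pixel(noisy_color, clean_mono)
    print("fused:", tuple(round(c, 3) for c in fused),
          "luma:", round(luma(fused), 3))
```

Because the eye is more sensitive to brightness detail than to color detail, borrowing brightness from the cleaner monochrome frame can make the combined image look sharper and less noisy, even though the color still comes from the Bayer sensor.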


Hello,

Nice article 🙂 A couple of points to note:

1. The MI A1 does not have a wide-angle lens (only in comparison to its telephoto, yes). The phones with true wide-angle cameras are LG's G6 and V20/30 series, plus one from Motorola.

2. Cameras with monochrome sensors still don't produce the results we expect from them. Most of the time they fall behind single-lens shooters in every budget range. The B/W shots are breathtaking, yes, but that's still a niche category, and most people are perfectly fine with a color sensor. Monochrome sensors in dual-lens setups on mobile phones will be obsolete soon.

Thanks Rajdeep for the feedback.

Yes, the MI A1 does not have a so-called wide-angle lens, but if you keep the budget in mind, and since it also has a telephoto lens, you can consider the other one a wide-angle lens. There's no rule 🙂

As for your second point, I feel that since phones don't have much room for big telephoto lenses, most companies are currently adopting dual monochrome + RGB sensor setups instead to make pictures sharper. Obviously, this won't last long in the mobile industry; change is inevitable.